On-Device AI Is Eating the Cloud (And Your Phone's Battery)

I've been tinkering with on-device AI models for the past few months, and honestly? It's wild how fast things are moving. We went from "AI needs a data center" to "AI runs on your phone" in what feels like the blink of an eye.

But here's the part nobody wants to talk about: your phone gets really, really hot when you do it.

Let me explain why on-device AI is a game-changer for developers, why I think we're headed for a new app gold rush, and why there's a catch that the hype crowd keeps glossing over.

Wait, AI Runs on My Phone Now?

Yep. And not just simple stuff like autocorrect or face detection. We're talking actual language models, real-time translation, and intelligent assistants running entirely on the chip inside your phone.

Apple's Neural Engine (on A18-series chips), Google's Tensor G4, and Qualcomm's Snapdragon AI Engine have all gotten good enough that you can run meaningful AI inference without ever pinging a server. Major AI labs have released models specifically designed for this: Llama 3.2 (1B and 3B parameter versions), Google's Gemma 3 (down to 270 million parameters), Microsoft's Phi-4 mini, and several others.

For the non-technical crowd: these are smaller, optimized versions of the big AI models you've heard about. They're designed to fit inside a phone's memory and run on its processor instead of in the cloud.

Why This Is a Game-Changer for Developers

Three words: speed, privacy, and offline.

Speed. On-device inference skips the network entirely. No round trip, no waiting for a server to spin up, no latency spikes when everyone's online at once. How fast it actually feels still depends on the model and the chip, but for real-time features like live translation, cutting out the network is the difference between "usable" and "magical."

Privacy by default. When AI runs on the device, sensitive data never has to leave the phone. No uploading your conversations, health data, or location history to someone else's server. For industries like healthcare and finance, this isn't just nice to have. It can make meeting compliance requirements a lot simpler.

Offline-first. Your app works in airplane mode, in a tunnel, in a rural area with zero signal. This might not sound exciting if you live in a city with 5G everywhere, but for a huge chunk of the world's mobile users, connectivity is still unreliable.

The use case that excites me most is real-time translation. Imagine traveling abroad and having your phone translate conversations in real time, no internet required, no data leaving your device. That's not science fiction anymore. That's buildable today with the models and hardware we have right now.

The New App Gold Rush

Here's my prediction: on-device AI is going to trigger a wave of new apps that simply couldn't exist before.

When AI inference required an API call to the cloud, every AI-powered feature came with ongoing server costs, latency tradeoffs, and privacy concerns. That made it hard for indie developers and small teams to build AI-native apps without burning through cash on infrastructure.

On-device AI flips that equation. The "compute" is effectively free for the developer because it's already sitting in the user's pocket. A solo developer can build an app with real AI capabilities, and the main cost is their time.

I think we're going to see a Cambrian explosion of small, clever apps that use local AI in ways the big companies haven't thought of yet. Translation apps that work offline. Smart journaling apps that analyze your writing patterns locally. Accessibility tools that process visual and audio information in real time on the device.

The barrier to entry just dropped dramatically, and that's when interesting things happen.

The Catch Nobody's Talking About: Battery and Heat

Okay, here's the part where I pump the brakes a little.

Running AI inference on a mobile chip is computationally expensive. These processors are doing serious math, and serious math generates serious heat. If you've ever noticed your phone getting warm during a long gaming session, imagine that, but for AI features running in the background.

Battery life takes a real hit too. The benchmarks for on-device AI models look great in controlled settings, but in the real world, where users are also running GPS, streaming music, and keeping 47 browser tabs open? It adds up fast.
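To make "it adds up fast" concrete, here's a back-of-the-envelope sketch of the arithmetic. The formula is just energy = power × time, divided by battery capacity. The specific numbers (a 15 Wh battery, 3 W of extra draw, 40 sessions a day) are made-up illustrations, not measurements from any real phone or model, so plug in what you actually profile.

```python
def battery_drain_percent(avg_power_watts: float, seconds: float, battery_wh: float) -> float:
    """Percent of a full battery used by a workload.

    energy (Wh) = power (W) * time (h); drain = energy / capacity.
    """
    if battery_wh <= 0:
        raise ValueError("battery_wh must be positive")
    energy_wh = avg_power_watts * (seconds / 3600.0)
    return 100.0 * energy_wh / battery_wh


# Illustrative assumptions only, not measured values:
# a 15 Wh battery and an AI feature that adds about 3 W while it runs.
per_session = battery_drain_percent(avg_power_watts=3.0, seconds=60, battery_wh=15.0)
per_day = per_session * 40  # hypothetical: 40 one-minute sessions a day

print(f"{per_session:.2f}% per session, {per_day:.1f}% per day")
```

A third of a percent per session sounds harmless. Under these assumed numbers it's the per-day total that users would notice.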

This is the tradeoff that the "on-device AI will replace cloud AI" crowd tends to skip over. Yes, you save on server costs. Yes, you get speed and privacy. But you're borrowing compute from a device that the user also needs to last until bedtime.

For developers, this means being smart about when and how you run on-device inference. Not everything needs to be processed locally in real time. Sometimes the right call is a hybrid approach: handle the quick, privacy-sensitive stuff on-device and offload the heavy lifting to the cloud when the user is on Wi-Fi and plugged in.
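Here's a minimal sketch of what that hybrid decision could look like as code. Everything in it (the `Task` and `DeviceState` fields, the `choose_route` function, the `DEFER` option) is a hypothetical shape I made up for illustration, not an API from any framework. The rules simply encode the paragraph above: private and quick work stays local, heavy work waits for Wi-Fi and a charger.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"
    DEFER = "defer"  # queue the job until conditions improve


@dataclass(frozen=True)
class Task:
    privacy_sensitive: bool
    latency_sensitive: bool
    heavy: bool  # too big to run comfortably on the phone


@dataclass(frozen=True)
class DeviceState:
    online: bool
    on_wifi: bool
    charging: bool


def choose_route(task: Task, device: DeviceState) -> Route:
    # Private data stays on the device no matter what.
    if task.privacy_sensitive:
        return Route.ON_DEVICE
    # Quick, interactive work stays local to avoid the network round trip.
    if task.latency_sensitive and not task.heavy:
        return Route.ON_DEVICE
    # Heavy lifting goes to the cloud only on Wi-Fi and plugged in.
    if task.heavy:
        if device.online and device.on_wifi and device.charging:
            return Route.CLOUD
        return Route.DEFER
    # Everything else: cloud when reachable, local as the offline fallback.
    return Route.CLOUD if device.online else Route.ON_DEVICE


plugged_in = DeviceState(online=True, on_wifi=True, charging=True)
on_the_go = DeviceState(online=True, on_wifi=False, charging=False)
batch_job = Task(privacy_sensitive=False, latency_sensitive=False, heavy=True)

print(choose_route(batch_job, plugged_in).value)
print(choose_route(batch_job, on_the_go).value)
```

Deferring instead of failing is a design choice here: a heavy job that isn't urgent can usually wait until the phone is back on a charger.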

What This Means If You're Building Mobile Apps

Whether you're a founder scoping your next product or a developer evaluating what to build with, here's how I'd think about it:

Start with the use case, not the tech. Don't add on-device AI because it's trendy. Figure out where local inference actually makes your app better (faster, more private, works offline) and focus there.

Test on real devices, not just flagships. On-device AI works great on the latest iPhone or Pixel. But what about a mid-range Android phone from two years ago? Device fragmentation is real, and only flagship chips handle these models smoothly right now.
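One rough way to reason about fragmentation is to estimate whether a model even fits before you try to load it. The sketch below uses simple arithmetic: weight size is parameter count times bits per weight, divided by 8. The 4-bit quantization default, the 1.3x overhead factor for runtime memory, and the `pick_model` helper are all assumptions of mine for illustration. The parameter counts are the nominal sizes mentioned earlier in this post, and real footprints will differ.

```python
def weights_size_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def pick_model(models: dict, ram_budget_gb: float,
               bits_per_weight: int = 4, overhead: float = 1.3):
    """Return the largest model whose estimated footprint fits, else None.

    `overhead` is an assumed fudge factor for everything beyond the
    weights (caches, activations, the runtime itself).
    """
    fitting = [
        (params, name)
        for name, params in models.items()
        if weights_size_gb(params, bits_per_weight) * overhead <= ram_budget_gb
    ]
    return max(fitting)[1] if fitting else None


# Nominal parameter counts in billions, as named earlier in the post.
MODELS = {
    "Gemma 3 270M": 0.27,
    "Llama 3.2 1B": 1.0,
    "Llama 3.2 3B": 3.0,
}

print(weights_size_gb(3.0, 4))                # weights for a 3B model at 4-bit
print(pick_model(MODELS, ram_budget_gb=1.0))  # tighter budget, e.g. an older phone
print(pick_model(MODELS, ram_budget_gb=2.5))  # roomier budget
```

The RAM budget is what your app can realistically claim, not the phone's total memory, and that number is something you only learn by testing on the actual device.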

Monitor battery and thermal impact. Your users will notice if your app drains their battery or makes their phone hot. Profile your AI features aggressively and give users control over when they run.
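As a sketch of what "give users control" plus "watch the thermals" might look like together, here's a tiny policy function. The four thermal levels mirror the ones iOS reports through ProcessInfo.thermalState, but the `inference_mode` function, the 20% battery threshold, and the three modes are hypothetical choices of mine. On a real app you'd feed this from the platform's thermal and battery APIs.

```python
from enum import IntEnum


class ThermalState(IntEnum):
    # Ordered from coolest to hottest so states can be compared with >=.
    NOMINAL = 0
    FAIR = 1
    SERIOUS = 2
    CRITICAL = 3


def inference_mode(thermal: ThermalState, battery_percent: float,
                   charging: bool, user_enabled: bool = True) -> str:
    """Return "full", "reduced", or "paused" for background AI work."""
    # The user's setting always wins.
    if not user_enabled:
        return "paused"
    # Stop adding heat once the device reports it is running hot.
    if thermal >= ThermalState.SERIOUS:
        return "paused"
    # Assumed threshold: treat under 20% and unplugged as low battery.
    low_battery = battery_percent < 20 and not charging
    if thermal == ThermalState.FAIR or low_battery:
        return "reduced"
    return "full"


print(inference_mode(ThermalState.NOMINAL, battery_percent=80, charging=False))
print(inference_mode(ThermalState.FAIR, battery_percent=80, charging=False))
print(inference_mode(ThermalState.SERIOUS, battery_percent=80, charging=True))
```

What "reduced" means is up to you: a smaller model, fewer background runs, or batching work for later are all reasonable options.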

Consider the hybrid model. On-device for latency-sensitive and privacy-critical tasks, cloud for everything else. This gives you the best of both worlds without cooking anyone's phone.

Where This Is Headed

On-device AI is real, it's here, and it's going to reshape how mobile apps get built. The models are getting smaller and more capable every quarter. The hardware is getting better. The tooling (Core ML, TensorFlow Lite, which Google now calls LiteRT, and ExecuTorch) is maturing fast.

But we're still early. The developers and companies who start experimenting now, who take the time to understand the tradeoffs and learn what works on-device versus in the cloud, are the ones who'll be ahead when this wave really breaks.

The gold rush is starting. Just make sure you bring a charger.

Building a mobile app and wondering where on-device AI fits in? Solac Labs helps teams design, build, and ship mobile apps that actually work in the real world. Let's chat.


Comments (0)

No comments yet. Be the first to share your thoughts!

Related Posts

Low-Code Hits 75%: Is the Era of "Everyone's a Developer" Finally Here?

Low-code and no-code platforms (think Bubble, FlutterFlow, Retool, Lovable) let you build software using visual interfaces instead of writing code line by line. Drag a button here, connect a database there, set up some logic, and you've got a working app.

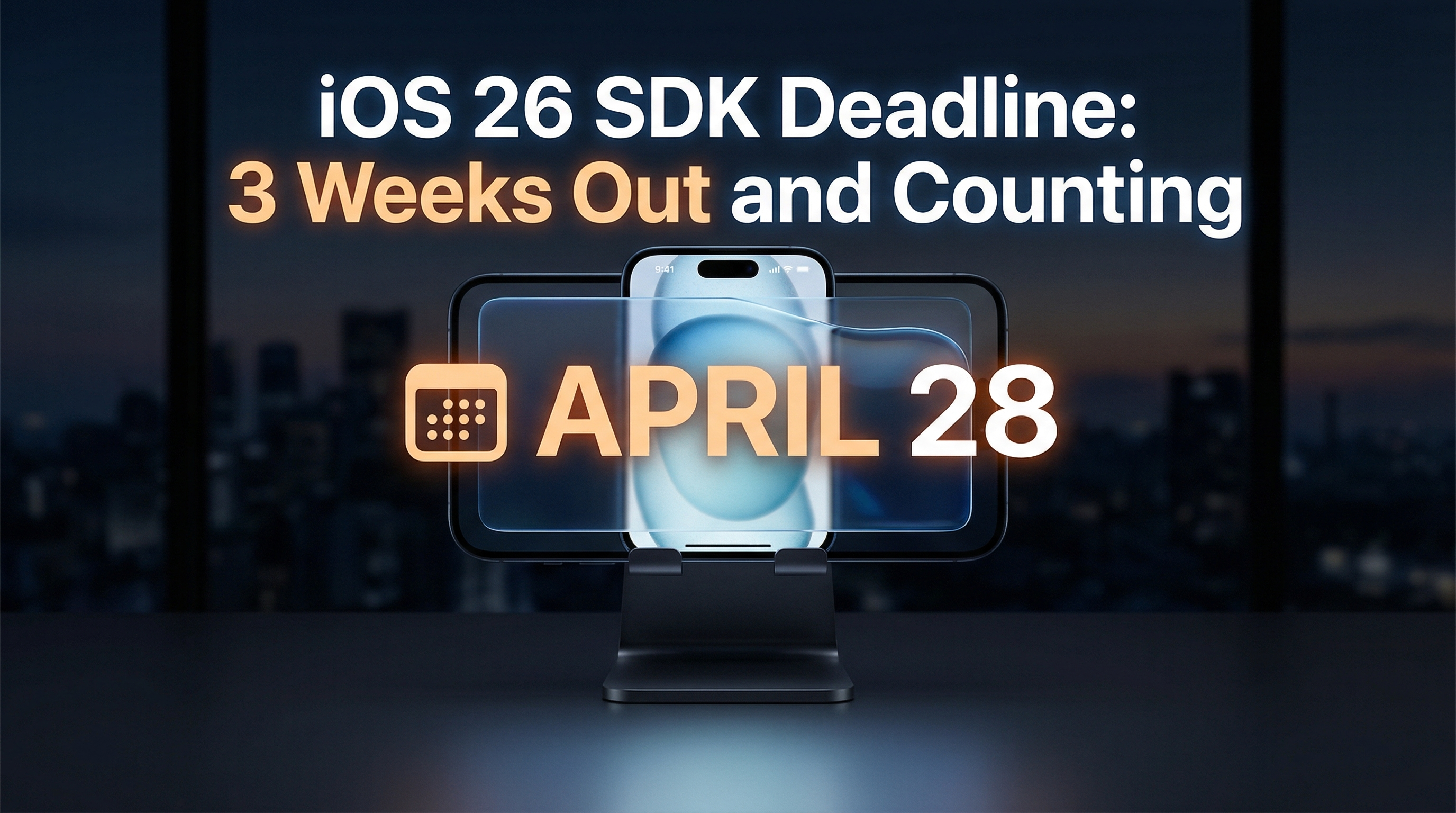

iOS 26 SDK Deadline: 3 Weeks Out and Counting

If you're building a mobile app (or running a company that has one), here's your friendly heads-up: April 28, 2026 is the cutoff. After that date, every app submitted to the App Store needs to be built with the iOS 26 SDK. No exceptions, no extensions, no "but we're almost done." I've been keeping

The Rise of Agentic AI: Your Software Is Learning to Do Things on Its Own

The rise of agentic AI - just a normal guy's opinion